AI content detectors attempt to distinguish human-written text from machine-written text using a mix of statistical signals and linguistic heuristics. While these systems can flag obviously synthetic outputs, they are far from perfect and often produce false positives on legitimate human writing. This article explains how detectors work in practice and why drafts humanized with Ryter Pro typically pass these checks.

How AI Detectors Typically Work

- Perplexity and burstiness: Text from large language models (LLMs) tends to be highly predictable to a language model (low perplexity) and to vary little in sentence length and structure (low burstiness). Detectors look for these footprints.

- Stylometry: Classifiers analyze lexical diversity, function-word ratios, sentence length dispersion, punctuation rhythms, and discourse markers to infer whether a passage resembles machine style.

- Semantic regularity: AI text can be overly consistent in tone and topic transitions; detectors use embeddings to catch this regularity.

- Metadata and watermarking (rare in the wild): Some research models add cryptographic or statistical watermarks, but these are not reliably present in most production outputs.
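
The signals listed above can be made concrete with a small sketch. This is an illustration under stated assumptions, not how any particular detector is implemented: production tools generally score perplexity with a neural language model, whereas the `unigram_perplexity` function here is a deliberately simple stand-in. The function names, the sentence splitter, and the short `FUNCTION_WORDS` list are all hypothetical choices made for this example.

```python
import math
import re
from collections import Counter

# Small illustrative function-word list (an assumption, not a standard lexicon).
FUNCTION_WORDS = {"the", "a", "an", "of", "to", "in", "and", "or",
                  "but", "is", "it", "that", "on", "for", "with", "as"}


def split_sentences(text):
    """Naive splitter: break after '.', '!' or '?' followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def tokenize(text):
    """Lowercase word tokens; apostrophes are kept inside words."""
    return re.findall(r"[a-z']+", text.lower())


def burstiness(text):
    """Coefficient of variation of sentence lengths, measured in tokens."""
    lengths = [len(tokenize(s)) for s in split_sentences(text)]
    lengths = [n for n in lengths if n > 0]
    if len(lengths) < 2:
        return 0.0
    mean = sum(lengths) / len(lengths)
    variance = sum((n - mean) ** 2 for n in lengths) / len(lengths)
    return math.sqrt(variance) / mean


def type_token_ratio(text):
    """Lexical diversity: distinct tokens divided by total tokens."""
    tokens = tokenize(text)
    return len(set(tokens)) / len(tokens) if tokens else 0.0


def function_word_ratio(text):
    """Share of tokens that appear in FUNCTION_WORDS."""
    tokens = tokenize(text)
    if not tokens:
        return 0.0
    return sum(t in FUNCTION_WORDS for t in tokens) / len(tokens)


def unigram_perplexity(text, reference):
    """Perplexity of `text` under an add-one-smoothed unigram model
    fitted on `reference` (a toy stand-in for a neural language model)."""
    ref_counts = Counter(tokenize(reference))
    tokens = tokenize(text)
    if not tokens:
        return float("inf")
    vocab_size = len(set(ref_counts) | set(tokens))
    total = sum(ref_counts.values())
    log_prob = sum(
        math.log((ref_counts[t] + 1) / (total + vocab_size)) for t in tokens
    )
    return math.exp(-log_prob / len(tokens))


uniform = "The cat sat down. The dog ran off. The bird flew up."
varied = ("Stop. The storm that had been building all afternoon "
          "finally broke over the harbor.")

print(burstiness(uniform))           # 0.0: every sentence has four tokens
print(round(burstiness(varied), 3))  # 0.857: sentence lengths are 1 and 13
```

A real classifier would combine many such features and learn thresholds from labeled data. The point of the sketch is only that each signal reduces to a number computed from the text, which is also why formulaic human writing can score as "machine-like".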

Why Detectors Produce False Positives

- Convergent writing style: Good human editors also optimize clarity and consistency, which may look “AI-like” to simple heuristics.

- Domain templates: Academic abstracts, legal writing, and product docs share predictable structures that trigger low-perplexity alarms even when entirely human-written.

- Short samples: On short passages, statistical features are unstable, so error rates spike.
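
The short-sample problem can be illustrated with a small simulation. This is a sketch under assumptions: sentence lengths are drawn from a synthetic uniform distribution rather than from real text, and `estimate_spread` is a hypothetical helper written for this example. It measures how much a burstiness estimate (coefficient of variation of sentence length) fluctuates across repeated samples of a given size.

```python
import random
import statistics


def estimate_spread(sample_size, trials=500, seed=0):
    """Standard deviation of a burstiness estimate across repeated samples.

    Sentence lengths come from a fixed synthetic distribution
    (an assumption made purely for illustration).
    """
    rng = random.Random(seed)
    estimates = []
    for _ in range(trials):
        lengths = [rng.randint(5, 30) for _ in range(sample_size)]
        mean = statistics.fmean(lengths)
        estimates.append(statistics.pstdev(lengths) / mean)
    return statistics.pstdev(estimates)


short_spread = estimate_spread(sample_size=5)
long_spread = estimate_spread(sample_size=200)
print(f"spread with 5 sentences:   {short_spread:.3f}")
print(f"spread with 200 sentences: {long_spread:.3f}")
```

The estimate from five sentences varies far more from sample to sample than the estimate from two hundred, so a fixed decision threshold applied to a short passage will misclassify more often.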

How Ryter Pro Bypasses AI Detection

Ryter Pro is trained on the stylistic fingerprints of millions of academic papers and editorial corpora. It doesn’t just paraphrase; it humanizes the draft by reconstructing rhetorical flow, injecting natural variability, and rebalancing lexical choices. Concretely, it:

- Restores human-like burstiness with varied sentence lengths and clause structures.

- Increases lexical diversity without introducing awkward synonyms, preserving domain precision.

- Uses discourse-level rewrites (signposting, concessions, qualifiers) that detectors struggle to model.

- Applies genre-aware tone shaping (scholarly, professional, narrative) learned from large editorial datasets.

See the Humanization Effect

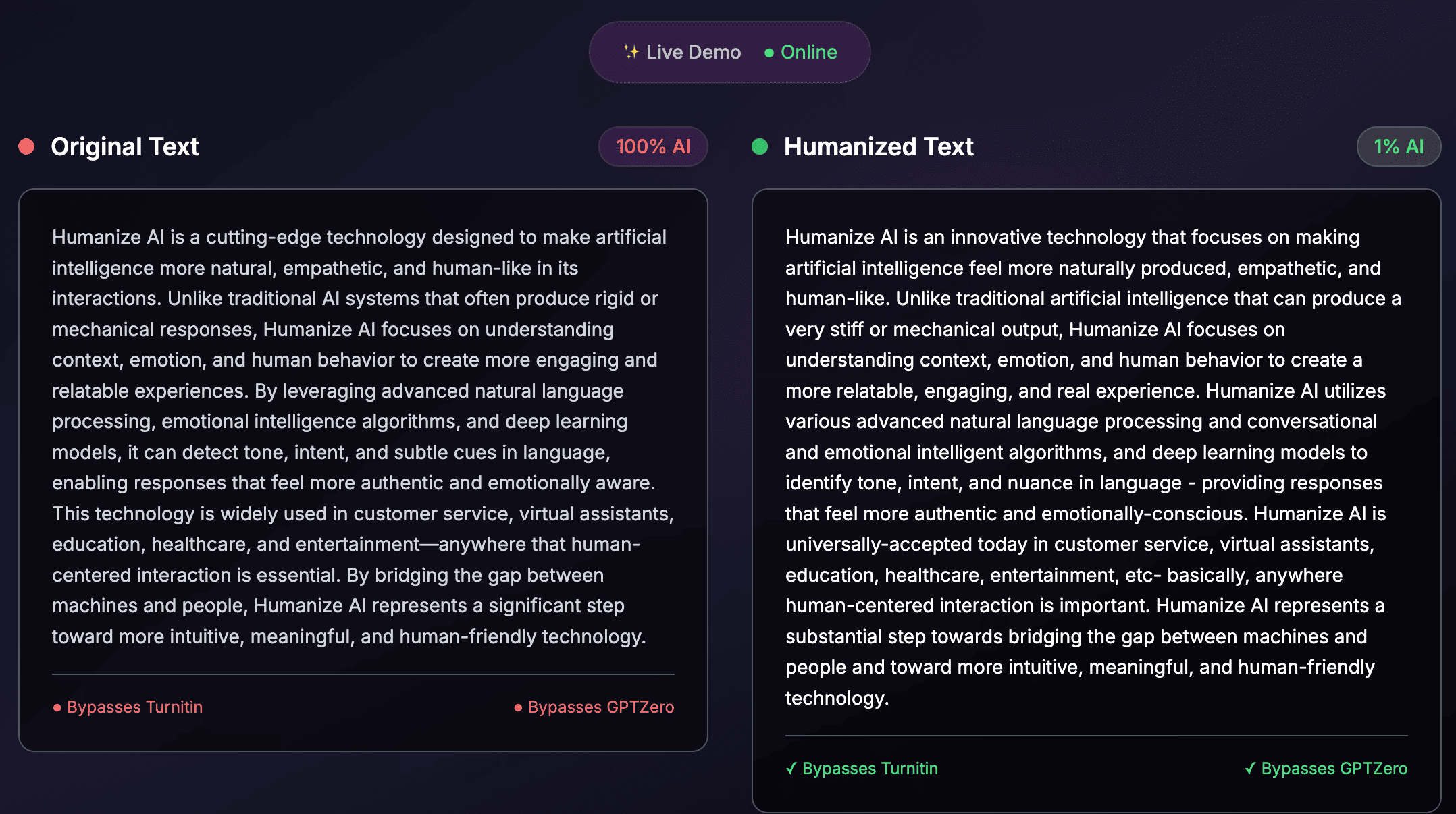

The following illustration shows a typical before/after humanization effect:

Practical Notes

- No single detector is definitive: Tools such as GPTZero, Originality.ai, and Turnitin’s AI modules are best treated as heuristics, not ground truth.

- Revise with intent: Ryter Pro’s humanization is most effective when the author reviews and adjusts tone for audience and purpose.

- Ethical use: Always follow institutional and publisher guidelines when submitting drafts.

In short, detectors largely measure distributional regularities. By rebuilding prose at the discourse level and reintroducing authentic human variation, Ryter Pro produces writing that reads naturally to humans and therefore typically falls outside the narrow patterns detectors target.